A global industrial automation manufacturer maintained its product catalog in a legacy product information management system. The data stored there was structured for operational purposes, not customer communication. Field names were abbreviated, descriptions were terse or absent, and the format reflected decades of data entry conventions rather than any coherent content strategy.

The organization was modernizing its web presence and needed customer-facing product descriptions that were clear, technically accurate, and ready to publish on the website. The team could not write hundreds of thousands of SKU summaries by hand within the schedule or budget.

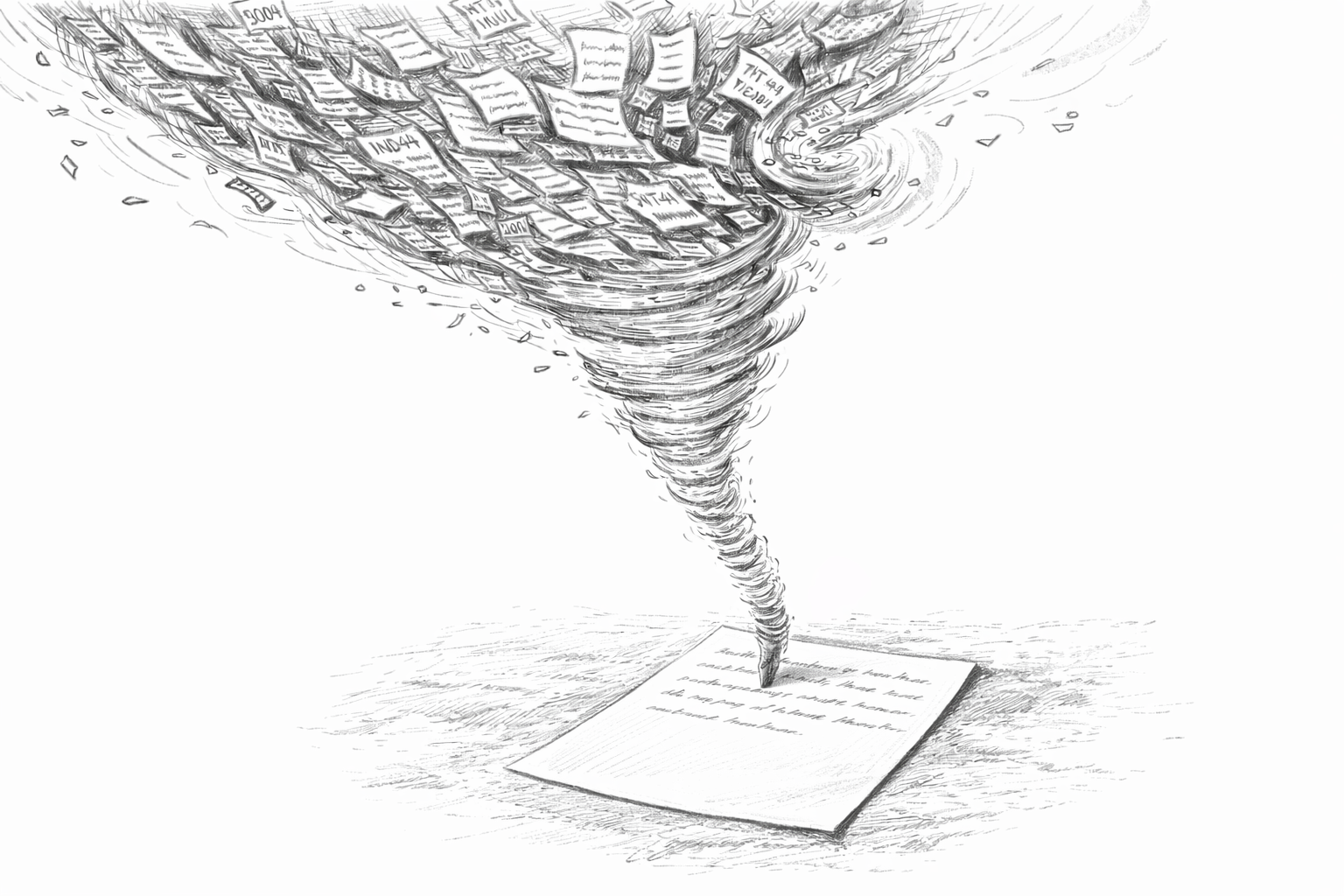

PiSrc used AI-powered summarization to generate product descriptions from the raw data fields available in the legacy system. The model took in each product's structured attributes (specifications, classifications, application codes, dimensional data) and wrote a web-ready summary of the product's purpose, main features, and relevant specifications.

The work went beyond tidying up old database fields. The AI needed to interpret abbreviated field values, infer relationships between attributes, and write descriptions clear enough for non-technical buyers but precise enough for engineers.

Because these were technical products, the descriptions had to be accurate. A product description that overstated a voltage rating or mischaracterized an operating temperature range could create safety risks in actual use. PiSrc added safeguards to reduce that risk.

Output constraints. The AI was configured to generate content only from the source data provided for each product. It was explicitly prohibited from inferring specifications not present in the input data or drawing on general knowledge to fill gaps. If a data field was missing, the summary omitted that attribute rather than guessing.
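As a rough illustration of the "omit rather than guess" rule, the sketch below filters a product record down to the fields that actually carry a value before anything is handed to the model. The field names (`rated_voltage`, `operating_temp`, and so on) and both helper functions are hypothetical; the case study does not describe how PiSrc implemented this step.

```python
from typing import Mapping, Optional


def present_attributes(record: Mapping[str, Optional[str]]) -> dict[str, str]:
    """Keep only fields with a real value; missing or blank fields are dropped."""
    cleaned: dict[str, str] = {}
    for name, value in record.items():
        if value is None:
            continue
        text = str(value).strip()
        if text:
            cleaned[name] = text
    return cleaned


def build_source_block(record: Mapping[str, Optional[str]]) -> str:
    """Render the surviving fields as 'name: value' lines for the model input."""
    attrs = present_attributes(record)
    return "\n".join(f"{name}: {value}" for name, value in sorted(attrs.items()))


# Hypothetical record: two fields are missing or blank and never reach the model.
record = {
    "rated_voltage": "24 V DC",
    "operating_temp": None,
    "ip_rating": "   ",
    "mounting": "DIN rail",
}
print(build_source_block(record))
```

Because absent fields are removed before generation, the model has nothing to paraphrase for them, which is one simple way to keep a summary from mentioning an attribute the source record never supplied.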

Factual anchoring. Every claim in the generated summary was required to trace back to a specific field in the source data. This made review efficient because reviewers could verify each statement against the input record.
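One way to picture this traceability is to store each generated statement together with the name of the field it came from, then check that the field exists and that its value appears in the statement. This is a minimal sketch under that assumption: the `Claim` type, the `unanchored_claims` helper, and the literal substring check are illustrative stand-ins, not the checks PiSrc actually used.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Claim:
    text: str          # sentence as it appears in the generated summary
    source_field: str  # name of the source field the sentence is based on


def unanchored_claims(claims: list[Claim], record: Mapping[str, str]) -> list[Claim]:
    """Return claims whose source field is missing or whose value is not quoted."""
    problems = []
    for claim in claims:
        value = record.get(claim.source_field)
        # Simplifying assumption: an anchored claim repeats the field value verbatim.
        if not value or value not in claim.text:
            problems.append(claim)
    return problems


record = {"rated_voltage": "24 V DC"}
claims = [
    Claim("Operates at 24 V DC.", "rated_voltage"),
    Claim("Rated for outdoor use.", "ip_rating"),  # no such field in the record
]
for claim in unanchored_claims(claims, record):
    print("needs attention:", claim.text)
```

A reviewer working from this kind of structure can jump from any sentence straight to the field it cites instead of rereading the whole input record.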

Terminology control. A controlled vocabulary ensured that product categories, safety certifications, and technical terms were rendered consistently and correctly across all generated content.
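A controlled vocabulary can be as simple as a lookup from known variants to one approved spelling, applied to every draft. The sketch below assumes that form; the entries in `CANONICAL_TERMS` and the `apply_vocabulary` function are hypothetical examples, and the real vocabulary and how it was enforced are not described in the source.

```python
import re

# Hypothetical variant -> approved term mapping (lowercase keys).
CANONICAL_TERMS = {
    "din-rail": "DIN rail",
    "din rail": "DIN rail",
    "ul listed": "UL Listed",
}


def apply_vocabulary(text: str, terms: dict[str, str] = CANONICAL_TERMS) -> str:
    """Replace each known variant with its approved form, ignoring case."""
    # Longest variants first so a short variant cannot pre-empt a longer one.
    for variant in sorted(terms, key=len, reverse=True):
        pattern = re.compile(r"\b" + re.escape(variant) + r"\b", re.IGNORECASE)
        approved = terms[variant]
        # A function replacement avoids treating the approved term as a regex template.
        text = pattern.sub(lambda _match, approved=approved: approved, text)
    return text


print(apply_vocabulary("Din-Rail mount, ul listed"))
```

Running the same pass over every summary keeps a term from appearing in several spellings across the catalog.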

Mandatory review workflow. Auto-publish was disabled. Every generated summary entered a review queue where subject matter experts could approve, edit, or reject the content before it went live. The workflow tracked revision history and approval status, providing an audit trail.
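The review gate can be sketched as a small state model in which nothing is publishable until a reviewer approves it and every action is appended to a history. All names here (`Status`, `ReviewItem`, `can_publish`) are hypothetical, and the rule that an edit returns the item to the pending state is an assumption rather than something the case study specifies.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ReviewItem:
    sku: str
    text: str
    status: Status = Status.PENDING
    history: list[tuple[datetime, str, str, str]] = field(default_factory=list)

    def _log(self, reviewer: str, action: str) -> None:
        # Audit trail entry: when, who, what action, and the text at that moment.
        self.history.append((datetime.now(timezone.utc), reviewer, action, self.text))

    def edit(self, reviewer: str, new_text: str) -> None:
        self.text = new_text
        self.status = Status.PENDING  # assumption: edited text needs approval again
        self._log(reviewer, "edited")

    def approve(self, reviewer: str) -> None:
        self.status = Status.APPROVED
        self._log(reviewer, "approved")

    def reject(self, reviewer: str) -> None:
        self.status = Status.REJECTED
        self._log(reviewer, "rejected")


def can_publish(item: ReviewItem) -> bool:
    """With auto-publish disabled, only explicitly approved items may go live."""
    return item.status is Status.APPROVED


item = ReviewItem(sku="SKU-0001", text="Draft summary.")
item.edit("reviewer_a", "Edited summary.")
item.approve("reviewer_a")
print(can_publish(item), [entry[2] for entry in item.history])
```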

Escalation paths. Summaries flagged by the system as low-confidence (due to sparse input data or ambiguous attribute values) were routed to senior reviewers rather than the general queue.
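A routing rule of this kind might look like the sketch below, which sends a record to senior review when it has too few populated fields or when any value contains a marker that suggests ambiguity. The threshold of three fields, the marker list, and the queue names are invented for illustration; the source does not say how confidence was actually scored.

```python
from typing import Mapping, Optional, Sequence


def route_for_review(
    record: Mapping[str, Optional[str]],
    min_fields: int = 3,                                   # hypothetical sparsity threshold
    ambiguous_markers: Sequence[str] = ("?", "TBD", "N/A"),  # hypothetical markers
) -> str:
    """Return 'senior' for sparse or ambiguous records, otherwise 'general'."""
    populated = {k: v.strip() for k, v in record.items() if v and v.strip()}
    if len(populated) < min_fields:
        return "senior"  # sparse input data
    for value in populated.values():
        if any(marker in value for marker in ambiguous_markers):
            return "senior"  # ambiguous attribute value
    return "general"


print(route_for_review({"rated_voltage": "24 V DC", "mounting": None}))
```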

The AI summarization pipeline produced draft content for the full product catalog. The review pass rate on first submission was high, with most summaries requiring only minor editorial adjustments rather than substantive corrections. The constraints and review step helped keep unsupported claims off the published pages.

The project finished much faster and at a lower cost than manual copywriting would have allowed. The workflow also gave the team a process they could reuse: as new products are added to the catalog, they enter the same pipeline automatically.

The project showed that generative AI can help with technical product copy if it is tightly constrained and reviewed by people. The team's takeaway: let AI draft the copy, but have people review it before anything is published.