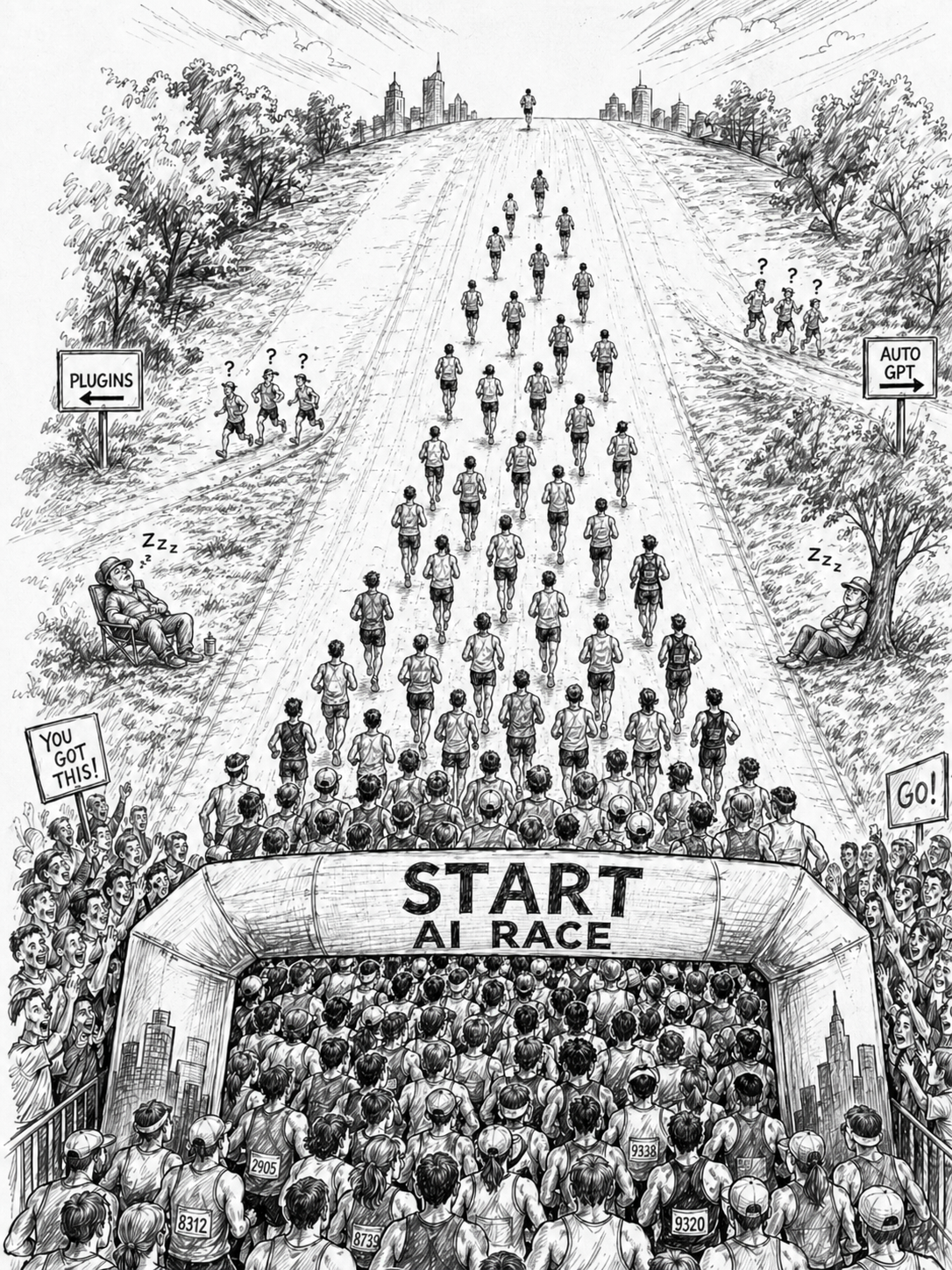

The AI landscape rewrites itself every few months. In four years the industry has moved through four distinct eras: from raw LLM chat, to retrieval-augmented generation, to formal guardrails, to agentic systems that plan and use tools. Each era recast what a serious enterprise deployment looked like. Each was punctuated by major model releases that shifted what was possible at every layer. The next era is already underway.

The organizations that win in this market are not the ones that bet correctly on a single architecture. They are the ones whose systems can absorb each shift as it arrives, adopting new capability on the timeline of the technology rather than the timeline of a rebuild. That capacity is engineered. It comes from disciplined testing practices borrowed from software engineering and applied to AI components with the same rigor: accuracy tests on known cases, regression tests against every change, and adversarial red teaming for unsafe behavior. Together these practices produce the confidence that lets a team adopt a new model in days, not months.

We call the principle festina caute: make haste carefully. Speed is non-negotiable in a market this fast, but recklessness in production AI is expensive. The reconciliation of those two pressures is engineering discipline, and it is what keeps a deployment fresh release after release.

A new frontier model lands every few months. Each one shifts what is possible: better reasoning, longer context, lower latency, cheaper tokens, new tool-use patterns, native multi-modality. The capability that defined a state-of-the-art deployment a year ago can sit a tier below what a current-generation model delivers out of the box.

The pace of change is not just about model releases. The architectural patterns built around the models have been changing just as fast. In four years, the industry has moved through four distinct eras, with the next already taking shape.

The first was raw LLM chat. ChatGPT broke through in late 2022 and the operating assumption was that a sufficiently capable language model could answer most questions on its own. The deployments that came out of this era were chatbots wired to a system prompt and not much else. Hallucination was a known problem with no agreed solution. Enterprises piloted, marvelled, and mostly held back from production.

The second era was retrieval-augmented generation. Through 2023 and 2024, RAG became the differentiating pattern for grounding model output in proprietary content. Embedding pipelines, vector databases, and retrieval orchestration filled a real gap. For a stretch, RAG defined what a serious enterprise AI deployment looked like.

The third era was guardrails and governance. As production deployments began to face real consequences for unsafe or off-brand output, the industry moved from informal prompt engineering to formal output constraints, structured generation, content policies, and review workflows. Many of the deployments described in The Agentic Enterprise were built in this period, with explicit guardrails as a first-class part of the architecture.

The fourth, current era is agentic. Models now plan, use tools, and execute multi-step workflows under governed boundaries. Retrieval becomes one capability inside a broader orchestration rather than the architecture itself. The Model Context Protocol introduced by Anthropic in November 2024, now governed by the Agentic AI Foundation, has emerged as the integration standard for tool use across providers. Agentic deployments routinely produce better results than the RAG-centric architectures they replaced, with more flexibility and less custom code.

The shifts will not stop. The leading edge will move again, and again, and again, before this paper is twelve months old.

Make haste, carefully. Speed is non-negotiable. The market moves at the cadence of model releases, and waiting is a strategic loss. Recklessness is also non-negotiable, because AI systems can fail in ways that look fine in a demo and surface as a production incident a week later. The reconciliation of those two pressures is engineering discipline. Specifically, it is a test infrastructure that lets you adopt new capability the day it becomes worth adopting, with evidence rather than hope behind every change.

The startup world adopted "fail fast" as the operating principle for early-stage product development, where the cost of being wrong is low and the cost of being slow is high. Production AI is a different setting. The cost of being wrong can be a privacy incident, a regulatory finding, or a customer-facing hallucination at scale. The principle has to evolve with the stakes. Festina caute is the version that fits the current moment.

For every architectural shift that becomes part of the standard stack, there are approaches that drew significant investment and then quietly faded. The pattern is informative because it shows how easy it is to over-invest in a particular technique just as the ground shifts beneath it.

A few examples from the recent past:

The takeaway is not that any of these were foolish bets at the time. Each addressed a real problem with the tools available. The takeaway is that committing fully to any one architecture is risky, and the systems that hold up are the ones that can let go of yesterday's pattern when a better one arrives.

When a major new model is released, our process compresses to days rather than months.

The existing regression suite runs against the new model unchanged. We get a quantitative comparison across the dimensions that matter for the specific deployment: factual accuracy, citation quality, tone, latency, cost per conversation, refusal rate, tool-call correctness. The result is rarely "better in every way." It is usually a tradeoff matrix: better at technical questions, slightly worse at conversational tone, faster on short prompts, more expensive on long ones. With that matrix in hand, the team decides whether to migrate fully, migrate selectively (route some traffic to the new model and keep others on the previous one), or wait for the next release.

When a new model adds a capability, like native tool use that did not exist before, native voice, longer context that lets us drop a chunking step, agent loops that previously required orchestration code, the team can prototype against it the same week. The regression suite tells us whether the change preserves accuracy. The red-team suite tells us whether the new surface area introduces new vulnerabilities. If both pass, we look for ways to integrate the new feature and the new approach ships. If they do not, the existing implementation stays. When OpenAI released GPT-5 mini, the cost tier upgrade from GPT-4.1 mini looked obvious. Our tests showed the opposite. For our workloads, the new reasoning capabilities chewed up tokens, slowed the pipeline, and delivered little advantage over the existing implementation on tasks that mattered. What works always beats what should work.

When a new prompt engineering technique gets published, or a new retrieval strategy, or a new agent pattern, the experiment runs in a sandboxed branch. The same suite measures the result. Promising approaches get promoted. Approaches that look good in a demo but degrade on real cases get filtered out before they reach a customer.

This is the operating tempo agentic AI rewards. Continuous iteration on the model, the prompts, the tools, and the architecture, with empirical evidence at every step. Solutions stay fresh because they keep absorbing the best of what becomes available.

The discipline that makes this tempo possible is testing. AI components get the same rigor as production code: structured tests, run automatically, blocking deployment on failure.

Accuracy testing asks whether the system produces correct output on inputs where the right answer is known. You assemble a curated set of inputs paired with expected outputs (the ground truth), run them through the system, and measure how often the system gets the answer right.

For a RAG system answering questions about a product catalog, the test set is a fixed list of question-answer pairs validated by domain experts. For an agent making tool calls, it is a set of user requests with the correct sequence of actions known in advance. For a translation pipeline, it is source-target pairs reviewed by native speakers. For a classification step, it is a labeled dataset. We also routinely run a list of known facts about the company, its products and services as part of an initial smoke test.

Accuracy in AI is rarely binary. Many tasks have a range of acceptable outputs, so the test framework needs to score along a gradient: semantic similarity to a reference answer, presence of required facts, absence of disallowed content, conformance to a rubric. PiSrc uses tools like promptfoo, an open-source framework that supports all of these in one place. The choice of tool matters less than the commitment to using one consistently.

Regression testing asks whether the system stays correct after changes. This is the layer that does the most work day to day, because every change to a deployed AI system is a regression risk: a refined prompt, a new tool added to an agent, an updated guardrail, a new retrieval index, a different model. Without a regression suite, the only way to find regressions is in production, often through customer reports.

In conventional software, regression tests run automatically on every commit. The same discipline applies to AI. When PiSrc updates a Prism playbook or refines a Metaphora translation rule, the change runs against the full regression suite before deployment. If accuracy on existing test cases drops, the change goes back for review.

This is what makes model upgrades a workflow rather than a project. The regression suite is the same one that ran for the previous generation. Pointing it at a new model produces an immediate, evidence-based answer to the upgrade question. Prompt engineering becomes the same kind of empirical exercise: a refined system prompt is a hypothesis that the new wording produces better results across the conversations that matter, and the suite settles the question.

Red teaming asks a different question. Where accuracy and regression testing measure expected behavior on known cases, red teaming probes for behavior the system should never produce: outputs from prompt injections, jailbreak attempts, ambiguous instructions, edge cases, attempts to elicit hallucinations, attempts to bypass guardrails, attempts to extract confidential information. The grading criterion is often not "is this output correct" but "is this output safe."

The same machinery extends to domain-required safety behaviors that are not strictly adversarial. In one manufacturing deployment, technical specifications about hazardous materials or electric shock risk should trigger precautionary messages directing the user to confirm details against published documentation. The red-team suite verifies both directions: that the precaution fires when the topic warrants it, and that no phrasing gets a user past the precaution to the raw specification alone.

PiSrc uses promptfoo's red-team capabilities to run adversarial and behavioral scenarios on a regular cadence. The library evolves continuously, drawing from new prompt injection techniques in security research and from specific failure modes our clients flag in their domain. EchoLeak (CVE-2025-32711), disclosed by Aim Labs in June 2025. The technique class, embedding adversarial instructions in upstream content the AI later ingests, generalizes to any RAG pipeline, document processor, or agentic system. When a vulnerability of that kind is disclosed, the corresponding attack pattern enters our testing suite, the same way a bug-fix unit test is registered in conventional software.

This matters especially when adopting new models, because each new model has its own failure profile. A model that resisted one class of injection in the previous generation may be more susceptible to a different class in the next. Red-teaming a new model before it goes to production is non-negotiable, and a mature suite makes that step routine rather than heroic.

The case for adoption is not theoretical. The deployments that fall behind are easy to spot. Engineers avoid changes because consequences are unpredictable. Product teams stop proposing improvements because every change feels risky. The system gets worked around rather than evolved. New features ship outside the AI layer because adding to the AI layer is too expensive. Eventually a competitor ships a noticeably better experience built on a newer foundation, and the only path to parity is to rebuild from scratch.

Test infrastructure is what prevents this. With it, every new release from OpenAI, Anthropic, Google, Meta, or the open-source community is an opportunity rather than a threat. The team can evaluate, decide, and adopt at the cadence of the technology itself, which in this market means continuously.

Most teams already write some informal tests: a few example prompts in a notebook, a spot check after a prompt change, a list of "things to verify" before deployment. The work is not to invent testing from scratch but to formalize what already exists and extend it.

Start with the conversations or workflows that matter most. Pick the top fifty or hundred user inputs your AI handles, document the expected outputs, and turn them into a structured test suite. Run it manually first, automate it next, then add red-team cases as they come up, either from your own threat modeling or from public security research. Wire the suite into your deployment pipeline so changes cannot ship without passing.

Once the suite exists, the next model release becomes a one-day exercise instead of a one-quarter project. That alone changes how an organization relates to the AI roadmap.

The Agentic Enterprise white paper made the case that AI is the operating layer that activates everything else an organization has built. That argument depends on a hidden assumption: that the AI layer can keep evolving as the underlying technology evolves, without forcing a rebuild every time a better option arrives. Without test infrastructure, that assumption is a hope. With it, the assumption becomes an engineering property.

PiSrc deploys AI inside the systems our clients already operate, with governance designed before deployment and resilience designed into the architecture. Test-driven engineering is what gives our clients the confidence to adopt new capability the day it becomes worth adopting, rather than the quarter after a competitor demonstrates the gap. New models will keep arriving. New patterns will keep emerging. Some will become the new standard. Others will fade. The clients who win are the ones whose systems can absorb each of those without rework, because the tests that protect them are part of the system from day one.

Festina caute. In a market moving this fast, that is the winning strategy.